System hardening is the practice of securing a computer system by minimising its attack surface. Measures can include uninstalling unneeded or unused software, especially software that runs a network service, and changing various system or application settings from flexible default values to more secure ones. This blog post investigates what hardening is meant to deliver, how it can be achieved, and the potential drawbacks and considerations to keep in mind.

What is “hardening”?

The term "hardening" (in full, "target hardening") originates in the military and security services, where it refers to strengthening the security of a building or other physical installation. Typical measures include modifications to the building itself (such as upgraded doors and windows), environmental alterations such as removing bushes or other ground cover that could offer hiding places or a screened approach to the installation, and adding or improving gates, fences, or other barriers.

What is the purpose of “hardening”?

Hardening can serve several purposes, including:

• Providing a visibly strong defence that deters attackers from making an attack against the target, in the knowledge that it may be expensive, time-consuming, or ultimately fruitless.

• Keeping adversaries at a distance.

• Protecting the target in the event of an attack by making it more resistant to various attack techniques, especially those involving brute force.

How does hardening relate to information security?

The same approach to hardening physical installations is very common in physical security today, for example when designing data centres, although the techniques and materials differ. The principles are centuries old: castles were built with strong walls and surrounded by moats, ditches and fences, and equivalent perimeter structures are still used, albeit with different materials and technologies.

However, this article does not look at physical security or how hardening applies to data centres, but at how analogous techniques can be used at the software level to protect web applications and servers against electronic forms of attack. This software-level practice is usually called system or server hardening, and the related checking activities are variously described as security auditing or compliance testing.

Why does hardening apply to web application security?

Several factors combine to make web servers and web applications appealing targets for criminals and others. They can be reached trivially across the internet from anywhere in the world; an attacker can often remain anonymous and safely distant, sometimes in a country that offers effective immunity from prosecution; and compromising a web application server can be lucrative in numerous ways, whether through extortion via ransomware or through the exfiltration (theft) of valuable data such as credit card numbers.

Those running web application servers and those trying to attack them are therefore in a constant arms race. Anyone running a web application service benefits from taking every practical measure to deter attackers and to prevent successful attacks against the servers and applications they are responsible for, protecting both their organisation and its customers.

How is hardening of web servers and other computer systems achieved in principle?

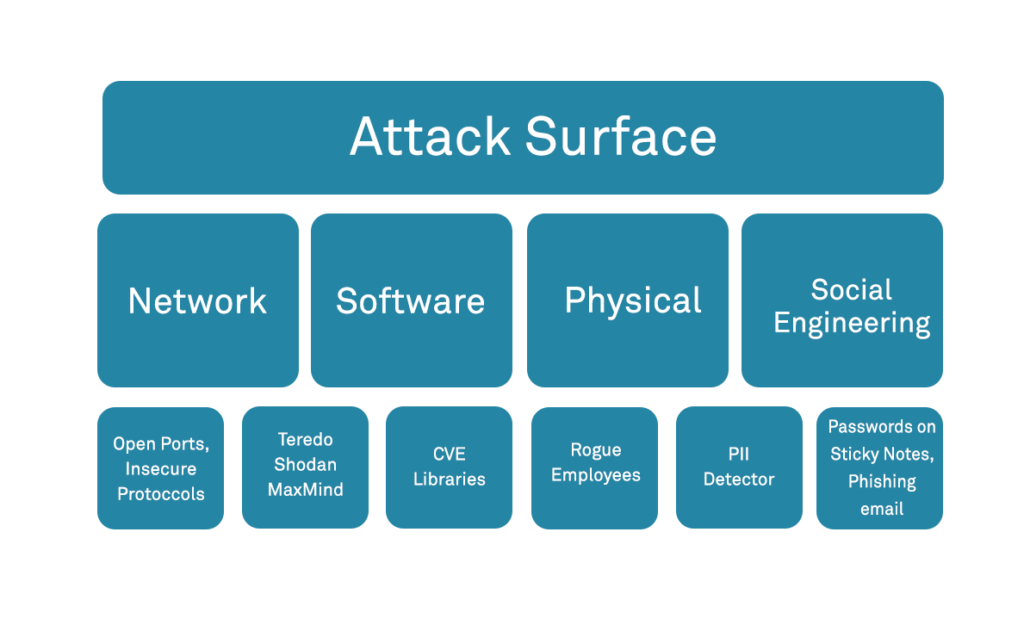

In the context of computer systems, hardening is often described as reducing the attack surface of the system in question. The attack surface of a system or network is normally defined as the sum of the different points (“attack vectors”) through which the system is exposed to attack. It can alternatively be viewed as the set of actions that can be performed on the system remotely. It may span several components, from externally facing services to internal components such as an associated database or the host operating system.

Intuitively, the more actions available to a user, or the more components reachable through those actions, the larger the exposed attack surface. The larger the attack surface, the more likely it is that the system can be successfully attacked, and hence the less secure it is. By reducing the attack surface of each component, we can decrease the likelihood of a successful attack and make the system more secure.
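A crude but concrete measure of the network-facing part of this attack surface is the set of TCP ports on which a host accepts connections. The minimal Python sketch below simply attempts a TCP connection to each port in a range. `listening_tcp_ports` is a hypothetical helper, not a substitute for a proper scanner: it only sees TCP services reachable from wherever it runs, and ignores UDP and anything filtered by a firewall. Only probe hosts you are authorised to test.

```python
import socket
from collections.abc import Iterable


def listening_tcp_ports(host: str, ports: Iterable[int], timeout: float = 0.5) -> list[int]:
    """Return the ports on `host` that accept a TCP connection.

    Each port that accepts a connection is a network entry point and so
    part of the host's attack surface (hypothetical helper for illustration).
    """
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success and an error number (e.g.
            # ECONNREFUSED for a closed port) rather than raising.
            if sock.connect_ex((host, port)) == 0:
                open_ports.append(port)
    return open_ports
```

For example, `listening_tcp_ports("127.0.0.1", range(1, 1025))` lists the well-known ports open on the local machine. Running the same check from an external network shows what is actually exposed to the internet, which is often less than what is listening locally.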

What methodologies are used in hardening web servers and other computer systems?

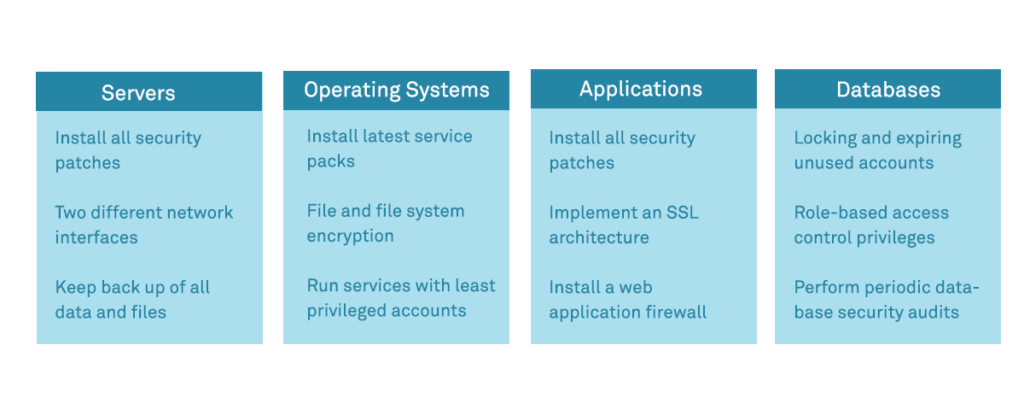

The number of potential ways in which a system can be hardened is extensive, but we provide a high-level summary of key considerations below. The same essential principles can be applied both to host configuration at the operating system level and to application or service configuration at the application level:

- Patching – Perhaps the most important consideration is to ensure that patching is in place and performed regularly. Wherever possible, patching should be automated so that new patches are applied promptly after release. Many Linux distributions distinguish between security updates and feature updates, so security patches can be set to install automatically as soon as they become available, without risking the installation of feature or upgrade patches that may break or unexpectedly alter functionality or compatibility.

- Principle of Least Privilege – The principle of least privilege means that accounts and services have access only to the resources they need to perform their defined function. The reasoning is that if an account or service is compromised, the attacker does not have the “keys to the kingdom” but is constrained in the damage they can do and the resources they can access. At the user level, this generally involves strong access control based on role-based access control (RBAC); at the service level, it generally means running each service under a system account with restricted privileges rather than, on Linux/Unix for example, as the “root” user.

- Password Security – It is important to ensure that all accounts are protected by strong passwords, that default passwords for system accounts are changed to secure ones, and that measures such as multi-factor authentication (MFA) are considered for user accounts.

- Access Vectors – In addition to ensuring that passwords are strong, the context or vector of access for each service or account should be considered and restricted where possible: for example, restricting administrative services such as SSH, or administrative application-layer paths, so that they are reachable only from the local network and not from the internet.

- Mandatory Access Control (MAC) – Many Linux distributions now ship with MAC frameworks such as AppArmor or SELinux. Their approaches differ somewhat, and configuring them takes some up-front commitment, but they permit a tighter security model in which access to system resources is governed by centrally defined policy rather than left to the discretion of individual users or processes.

- Minimise network services – Perhaps the most visible part of a network host’s attack surface is the number of open (listening) ports on which services are running. Wherever possible, services that are not needed for the host’s essential functional role should be disabled.

- Software removal – Removing unnecessary services and software, particularly network services, means there is less software to maintain, less running code that may contain vulnerabilities, and fewer options for attackers to target.

- Boot hygiene – Minimising what starts at boot time is also important. Services disabled during hardening must not simply restart on the next boot, and more generally the system should be configured to boot directly into a hardened state.

- Minimise entry points and backdoors – software developers will sometimes leave in “backdoors” for debugging or access during development, but it is important that these are removed or disabled in production code.

- Logging – Although not directly a hardening measure, ensuring that significant events (especially access control events) are logged, monitored, and alerted on when anomalous is an important part of maintaining a host’s security.
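Several of the measures above (least privilege, password security and access vectors) meet in the configuration of an SSH daemon. As a minimal, illustrative sketch, the Python below checks the text of an OpenSSH `sshd_config` file against three real directives: `PermitRootLogin`, `PasswordAuthentication` and `PermitEmptyPasswords`. The baseline values and the helper names are assumptions for illustration (a baseline of `PasswordAuthentication no` assumes key-based authentication is already in place). The parser ignores `Include` files and stops at the first `Match` block, so for a real audit `sshd -T`, which prints the effective configuration, or a dedicated auditing tool should be preferred.

```python
import re

# Hypothetical baseline: lower-cased directive -> expected lower-cased value.
SSHD_BASELINE = {
    "permitrootlogin": "no",
    "passwordauthentication": "no",
    "permitemptypasswords": "no",
}


def effective_sshd_settings(config_text: str) -> dict[str, str]:
    """Parse sshd_config text into {keyword: value}.

    sshd uses the first value it obtains for each keyword and keywords are
    case-insensitive, so later duplicates are ignored here too. Include
    directives are not followed, and parsing stops at the first Match block.
    """
    settings: dict[str, str] = {}
    for raw in config_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and comments
        # Keyword and value are separated by whitespace and/or a single '='.
        parts = re.split(r"[\s=]+", line, maxsplit=1)
        key = parts[0].lower()
        if key == "match":
            break  # everything after a Match line is conditional
        if len(parts) == 2:
            settings.setdefault(key, parts[1].strip().lower())
    return settings


def audit_sshd(config_text: str, baseline: dict[str, str] = SSHD_BASELINE) -> list[str]:
    """Return findings where the file deviates from the baseline.

    A missing directive is reported as a finding, even where sshd's
    built-in default might already be acceptable.
    """
    settings = effective_sshd_settings(config_text)
    findings = []
    for key, expected in baseline.items():
        actual = settings.get(key)
        if actual != expected:
            findings.append(f"{key}: expected {expected!r}, found {actual!r}")
    return findings
```

For example, `audit_sshd(open("/etc/ssh/sshd_config").read())` returns an empty list only when all three directives are explicitly set to `no`.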

How do I go about hardening my web servers?

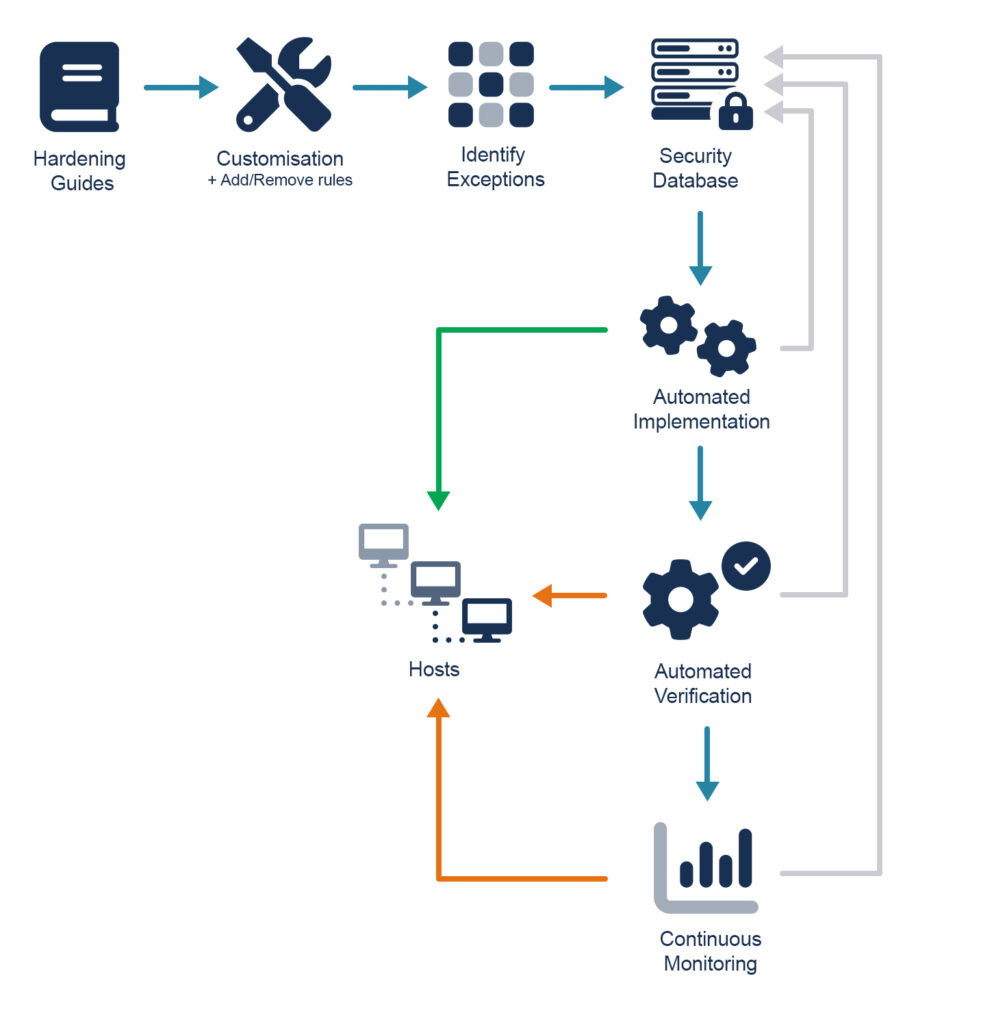

It is possible to review system and application configuration manually, but there are generally hundreds of items to check, so manual review can be extremely time-consuming and prone to error or inconsistency between systems. It is generally better to use an automated method to check that a system is suitably hardened, and to action any recommendations only after careful review.

System auditing scripts and tools include Lynis and Bastille, and a number of others are also available. Most of these tools provide either auditing (checking whether a system is hardened and pointing out gaps or potential improvements) or hardening (automatic or guided application of a hardened configuration to a host). The hardening they apply is generally drawn from one of several well-known, trusted configuration baselines that are maintained separately and can be used as open standards for what a secure configuration looks like. Two of the more commonly applied are the CIS (Center for Internet Security) Benchmarks and SCAP (Security Content Automation Protocol) content, which can be evaluated with tools such as OpenSCAP.
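The core of the auditing approach can be sketched in miniature: compare live settings against a baseline and report the gaps without changing anything. This minimal Python sketch assumes a Linux host, where kernel parameters are exposed as files under `/proc/sys`. The four parameters in `SYSCTL_BASELINE` are illustrative examples of the kind of setting such baselines cover, not a quotation from the CIS Benchmarks or any SCAP profile, and `audit_sysctl` is a hypothetical helper, not part of Lynis or OpenSCAP.

```python
from pathlib import Path
from typing import Optional

# Illustrative values only, NOT an excerpt from any published benchmark.
SYSCTL_BASELINE = {
    "net.ipv4.ip_forward": "0",                 # not a router
    "net.ipv4.conf.all.accept_redirects": "0",  # ignore ICMP redirects
    "net.ipv4.conf.all.send_redirects": "0",    # do not send ICMP redirects
    "kernel.randomize_va_space": "2",           # full address space randomisation
}


def audit_sysctl(
    baseline: dict[str, str], proc_root: str = "/proc/sys"
) -> dict[str, tuple[str, Optional[str]]]:
    """Compare kernel parameters with a baseline; audit only, nothing is changed.

    Returns {parameter: (expected, actual)} for every deviation. `actual` is
    None when the parameter cannot be read (e.g. it does not exist on this kernel).
    """
    gaps = {}
    for name, expected in baseline.items():
        # sysctl names map onto /proc/sys paths: dots become directory separators.
        path = Path(proc_root, *name.split("."))
        try:
            actual: Optional[str] = path.read_text().strip()
        except OSError:
            actual = None
        if actual != expected:
            gaps[name] = (expected, actual)
    return gaps
```

Calling `audit_sysctl(SYSCTL_BASELINE)` on a Linux host returns only the parameters that differ. Keeping the function report-only leaves the decision to remediate with an administrator.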

What other linked considerations are there in managing system hardening?

- Configuration drift – Perhaps the most important consideration is that system hardening is not a one-time event. It is one thing to harden a system and another to ensure that it remains hardened: servers operate in a dynamic environment, and changes made by administrators or others can weaken the hardened configuration over time. It is worth considering how this will be managed. Options include continuous or scheduled auditing, or the immutable host paradigm more commonly found with containers, in which hosts are deployed in an essentially read-only state so that their configuration is fixed at deployment time. Configuration management tools such as Chef or Ansible can also enforce configuration over time.

- Consistency, Deployment and Standardisation – If you manage more than a handful of servers, it is worth considering how you will keep hosts consistent and make your chosen hardening method scalable. Options include maintaining a hardened “gold image” from which all your servers are provisioned (e.g. a container image or base virtual machine image), or defining hardened configurations in a configuration management system such as Chef or Ansible (or Group Policy in Windows environments), which are then applied at deployment.

- Backups, Redundancy, Dispersion & Capacity Management – Although not often considered part of a core system hardening programme, it is worth looking at the “big picture” beyond individual hosts, to how the estate as a whole is hardened against attack. This includes ensuring that data is backed up and that resources are dispersed, redundant and have sufficient capacity to cope with the compromise or failure of any one component or region.

- Off-Host Resource Management – As well as the host configuration itself, it is worth giving some consideration to the off-host and off-network resources that underpin your service delivery and that could be compromised if not managed securely. Examples include domain registrations (to protect against domain takeover), SSL/TLS certificates and WHOIS records.
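One simple way to implement scheduled auditing for configuration drift is to record a cryptographic hash of each key configuration file once a host has been hardened, then compare against those hashes periodically. The minimal Python sketch below does exactly that with SHA-256; `fingerprint` and `detect_drift` are hypothetical helper names, and the choice of files to watch is left to you. It only detects changes to file contents, not runtime state such as running services, and it reports drift rather than reverting it, so changes can be reviewed by a person.

```python
import hashlib
from collections.abc import Iterable
from pathlib import Path
from typing import Optional


def fingerprint(paths: Iterable[str]) -> dict[str, Optional[str]]:
    """Map each file path to the SHA-256 hex digest of its contents (None if missing)."""
    result: dict[str, Optional[str]] = {}
    for p in paths:
        try:
            result[str(p)] = hashlib.sha256(Path(p).read_bytes()).hexdigest()
        except FileNotFoundError:
            result[str(p)] = None
    return result


def detect_drift(baseline: dict[str, Optional[str]]) -> list[str]:
    """Return, sorted, the paths whose current digest differs from the recorded one."""
    current = fingerprint(baseline.keys())
    return sorted(p for p, digest in baseline.items() if current[p] != digest)
```

A typical pattern is to call `fingerprint([...])` right after hardening, store the result somewhere the monitored host cannot modify, and run `detect_drift` on a schedule, raising an alert whenever it returns a non-empty list.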

What drawbacks or dangers are there in system hardening?

System hardening is undoubtedly of net benefit to any organisation, but there are nevertheless some factors to keep in mind:

- Cost, Time and Effort – A general rule of thumb in information security is that the cost of security measures should not exceed the total (original or replacement) cost of the item or resource being protected. Setting up a robust system hardening process that remains effective over time takes a commitment of time and resources, and this should be estimated and weighed up front.

- Usability and functionality – The demands of system hardening often conflict with the flexibility and usability of a host, which hardening can restrict. It is therefore very important that every configuration change made during hardening is well understood before it is applied, and that the role of the host and its necessary functions are carefully considered.

- Unexpected change – Once a system is in operational use, it is almost always risky to alter its running configuration without careful review. Rather than having hardening scripts apply changes to production systems automatically (especially when they run on a continuous or repeating schedule to enforce configuration), it is generally advisable to flag any configuration drift for manual review, and to perform any required remediation only after testing and under managed change control processes.

About AppCheck

AppCheck is a software security vendor based in the UK, offering a leading security scanning platform that automates the discovery of security flaws within organisations’ websites, applications, networks, and cloud infrastructure. AppCheck are authorized by the Common Vulnerabilities and Exposures (CVE) Program as a CVE Numbering Authority (CNA).

Additional Information

As always, if you require any more information on this topic or want to see what unexpected vulnerabilities AppCheck can pick up in your website and applications then please get in contact with us: info@localhost