Every year, Verizon Communications Inc, a multinational telecommunications conglomerate, publishes a report known as the Verizon Data Breach Investigations Report. The report compiles data from over 40,000 security incidents experienced over the preceding 12 months across a range of public and private sector organisations, and uses it to analyse and provide insight into the most common threats in the current landscape.

The most recent (2019) report suggests that more than 75% of attacks continue to originate with external sources rather than internal disenfranchised employees. Additionally, 40% of data breaches seen targeted vulnerabilities in web applications, with 908 of a total 5,334 incidents resulting in confirmed data disclosure. The statistics are clear – despite increasing maturity of security controls, external web applications continue to be a lucrative route of exploit for attackers.

In an earlier article we have covered the importance of vulnerability scanning and why it remains a powerful tool in your security arsenal – in this article we will examine specifically why it is important to leverage one of the most powerful advantages that dynamic application security testing (DAST) or vulnerability scanning has over manual penetration testing: its ability to be scheduled for frequent or continuous assessment.

Companies whose only experience of security testing is penetration testing sometimes implement vulnerability scanning on a semi-regular basis, or even just as an annual test. There are compelling reasons why, in doing so, they are missing out on some of the strongest benefits that DAST scanning can deliver, as well as exposing their organisation to unnecessary risk.

In this article, we’ll run through a number of reasons why regular scanning is not just beneficial but essential to realising the full potential of DAST scanning, delivering benefits that simply cannot be delivered by penetration testing alone.

So how often should you scan? We’d argue: “As often as you can, perhaps weekly, with partial scans running every day.” If you’re approaching this article from a background of having performed vulnerability scans or penetration testing perhaps every few quarters, or even just annually, this may seem patently absurd, unmanageable and unnecessary.

But it is an approach based on sound technical underpinnings related to modern web applications, threat landscapes, and development practices. Let’s run through the various reasons why running your vulnerability scans as often as possible maximises the benefits to you, your business, and your customers, and why it is, in fact, the only appropriate paradigm for vulnerability scanning in 2020.

The simplest analogy for why vulnerability scans should be run regularly is the physical security of your premises. The process is the same: continuously testing and assuring that preventative measures are in place and secure, and that no breach is in progress and no opportunity exists for an attacker. In a physical security environment, you might advise staff to lock doors and close windows out of hours, as a policy directive, but you would then employ a security guard to walk the perimeter – to check visually that windows are shut and to rattle door handles to ensure doors are locked.

Now the important question – how often would you expect the security guard to do this? Annually? It starts to seem a little ridiculous to suggest that you only test and verify your controls for weaknesses on such a long cycle, doesn’t it? Your premises are in use every day, and an attack could come at any time.

The same is no less true for your web applications, to which attackers have access 24 hours a day, and which present a constantly changing attack surface. The online world moves faster than bricks-and-mortar, not slower, so it makes absolute sense to ensure that your security assurance controls such as vulnerability scanners align with this pace of change.

Let’s take a look at some of the common drivers for performing vulnerability scans more frequently in order to fully leverage their benefits, as well as why this provides less burden on your business’ technical teams, not more:

You may be responsible for purchasing, managing or operating the vulnerability scanning solution, but you (or your team) are not an island. Vulnerability detection is only part of a cohesive vulnerability management life-cycle, and buy-in from other technical teams is absolutely essential if vulnerabilities detected are to be remediated in a timely fashion. The results of a vulnerability scan are only as valuable as the willingness of the IT admin to accept the results and act on them – so make it manageable.

Place yourself in the position of a development or operations team managing a service and ask yourself whether you would prefer to receive:

I would expect most people to vote overwhelmingly for Option 1. Having worked in several enterprise organisations, I have observed first hand how providing fewer findings, more often, prevents frustration and fosters co-operation between teams. “Little and often” makes the vulnerability management process Business As Usual, rather than an extraordinary demand for resources on an irregular basis. Managers and scrum masters can factor in 5% “fix time” per week or Sprint, and vulnerability management becomes part of the status quo. Providing regular vulnerability reports from frequent scans, each with a small delta to the last, helps everyone.

New vulnerabilities in software are published regularly as CVEs. When a new vulnerability is reported, it triggers a race against the clock between the various people involved. From an organisation’s point of view, teams need to roll out the necessary security patches to rectify the flaw as soon as the vendor supplies them.

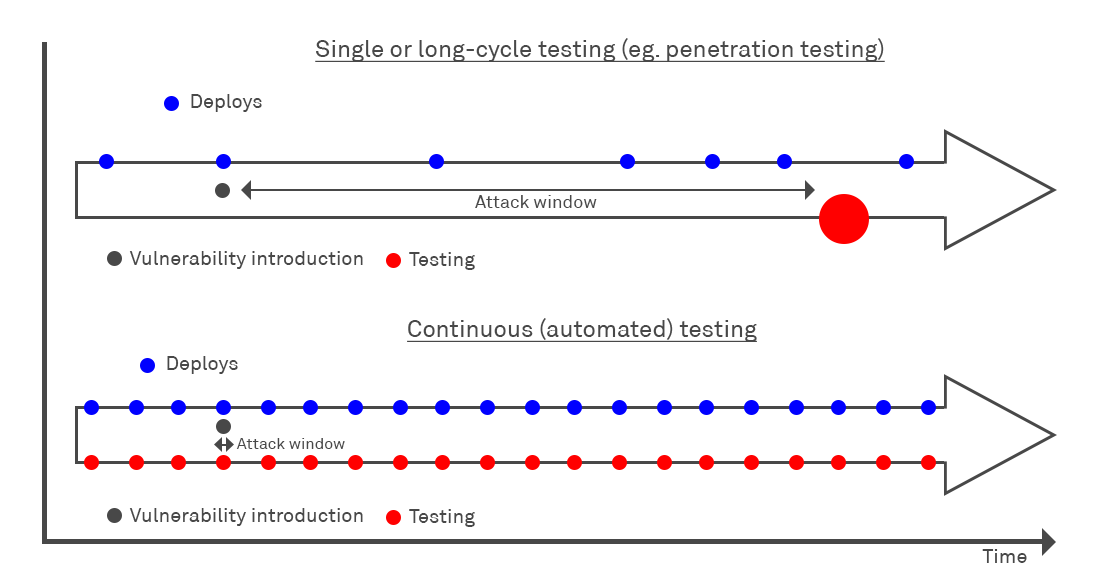

However, at the same time, attackers will start developing exploits that take advantage of the identified weaknesses. The race is on, and the period until you patch is known as the “attack window”, during which an attacker can take advantage of the vulnerability on your systems.

If you are only performing vulnerability scanning with a long interval between scans, it may be months before you are even aware that one of your systems is unpatched and vulnerable. Scanning more regularly doesn’t find more vulnerabilities or present a greater burden, but it reduces the time between a vulnerability being exposed on your system and you becoming aware of it and patching it, tipping the scales in your favour and against the attacker.
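
The effect of the scan interval on the attack window can be illustrated with some simple arithmetic. The sketch below is only a rough model: it assumes a vulnerability becomes exposed at a uniformly random moment between two scans, so the average wait until the next scan notices it is half the scan interval. The function name and the fixed patch time are illustrative assumptions, not figures from any report.

```python
def mean_attack_window_days(scan_interval_days: float, patch_days: float) -> float:
    """Average number of days from exposure to patch.

    Assumption: the exposure happens at a uniformly random point between
    two scans, so the mean wait for the next scan is half the interval.
    patch_days is the (assumed constant) time taken to patch once aware.
    """
    if scan_interval_days <= 0:
        raise ValueError("scan_interval_days must be positive")
    if patch_days < 0:
        raise ValueError("patch_days must not be negative")
    return scan_interval_days / 2 + patch_days
```

Under these assumptions, and with a five-day patch time, an annual scan gives an average window of 187.5 days, against 8.5 days for a weekly scan. The number of vulnerabilities is the same in both cases; only the time spent unaware of them changes.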

Systems development used to be a slow process with long development cycles. However, the advent and adoption of DevOps and Agile practices within organisations often means that development teams are using Continuous Deployment practices and living up to the dream of minimal diffs and multiple deploys per day. This is great news for product releases, but it means that bad code can be released more quickly and more frequently too.

The more Agile your development teams become, the more disconnected a legacy “infrequent scan” approach becomes. If you release code regularly, it makes intuitive sense that you scan the application more frequently too.

If you think that scanning an application frequently is not possible, then it’s worth looking at how a product such as AppCheck can deliver on these requirements. With custom scan profiles to execute only relevant plugins, limited-scope crawls to target only changed paths in your application, an API for scan command & control, and CI integration, it is possible to perform automated, fast and targeted scans in response to code deploys, to partner and complement less frequent – perhaps weekly – deep-dive scans of your entire estate.

Modern web applications are complicated beasts. The use of Content Delivery Networks, proxies, round-robin DNS, load balancers, application routing, Web Application Firewalls and other technologies means that your web application may not always respond in exactly the same manner. Differing responses, timeouts, website bannering and outages, and intermittent behaviours may mean that making only a single scan per year fails to represent typical application behaviour. The more frequently that you scan, the greater assurance you have that you capture applications in a range of typical states, and hence are more likely to spot, and remediate, vulnerabilities.
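
To put a rough number on this, suppose an issue is only visible to a scanner some of the time, for example because only one node behind a load balancer is misconfigured. The sketch below assumes each scan has the same independent chance of observing the issue, which is a simplification; the function name and the probabilities are illustrative.

```python
def detection_probability(p_visible: float, scans: int) -> float:
    """Chance that at least one of `scans` scans observes an intermittent issue.

    Assumption: each scan independently sees the issue with probability
    p_visible, so the chance of missing it every time is (1 - p_visible) ** scans.
    """
    if not 0.0 <= p_visible <= 1.0:
        raise ValueError("p_visible must be between 0 and 1")
    if scans < 0:
        raise ValueError("scans must not be negative")
    return 1.0 - (1.0 - p_visible) ** scans
```

Under this model, an issue visible on one scan in four has a 25% chance of being seen by a single annual scan, but is almost certain to be seen at least once across 52 weekly scans.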

Most of the reasons outlined in this article relate in some way to code deployment, code errors and code management. However, changes to infrastructure equipment and systems, whether made under change management or not, can have an equally disastrous security impact, and are a daily occurrence within many organisations.

Scanning your web infrastructure regularly ensures that any misconfigurations introduced in systems or services are detected swiftly.

Sometimes things can change even when they haven’t been changed. What do we mean by that? Well, an organisation may believe that no vulnerability can possibly have been introduced because they haven’t made any change to their service, whether that is their infrastructure (systems) or web application code. However, some resource behaviour can change even where no explicit change action has been performed, allowing vulnerabilities to be introduced: SSL certificates can expire or be revoked, domain registrations can expire, permitting domain takeover, and products can go End of Life (EOL) and cease to receive ongoing critical security updates or advisories. In combination with appropriate asset management techniques, performing scanning regularly ensures a greater chance that these issues are detected early.
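
Certificate expiry is a good example of a change that happens without anyone touching the system. As a minimal sketch, the check below works on the `notAfter` string in the format that Python's `ssl` module reports for a peer certificate; the helper names and the 30-day warning threshold are assumptions chosen for illustration.

```python
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days remaining before a certificate expires (negative if already expired).

    not_after uses the certificate time format understood by
    ssl.cert_time_to_seconds, e.g. 'Jan 15 00:00:00 2030 GMT'.
    now must be a timezone-aware datetime.
    """
    expires = datetime.fromtimestamp(ssl.cert_time_to_seconds(not_after), tz=timezone.utc)
    return (expires - now).total_seconds() / 86400


def needs_attention(not_after: str, now: datetime, warn_days: int = 30) -> bool:
    """True if the certificate has expired or expires within warn_days (assumed threshold)."""
    return days_until_expiry(not_after, now) <= warn_days
```

Run daily, a check like this flags a certificate weeks before it lapses; run annually, it would most likely report the expiry long after visitors had already seen browser warnings.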

Vulnerability scanning typically performs a vulnerability identification function – that is, it seeks to detect vulnerabilities in exposed systems and services that are present due to weaknesses in software code or configuration, but which do not indicate an active ongoing exploit by an attacker, and which can be remediated before such an exploit can occur. However, whilst not being their primary function, vulnerability scans can be a useful secondary control to detect indications of compromise (exploits having already occurred) such as unexpectedly open ports or services on your systems, or potential malware presence that may represent a breach in progress.

Cybercriminals spend an average of 191 days inside a corporate network before they are detected, according to a 2018 IBM research report, and during that time they can attempt to compromise an increasing number of systems and exfiltrate large amounts of data. Faster reaction to breaches limits the potential harm to your organisation and its customers, and scanning more frequently may not only let you spot possible signs of exploit earlier, but performs an additional function in keeping timestamped records that can be referred back to as to exactly when a specific system change was detected as occurring.

We’ve already had a crack at developers and system administrators for introducing vulnerabilities, so we might as well have a pop at marketing and content management teams too. Content Management Systems (CMS) such as WordPress allow non-technical teams to manage web presences such as blogs. However, by design many allow the pasting of rich content and links, which are an attractive vector for attackers seeking to introduce vulnerabilities such as Stored Cross Site Scripting (XSS). CMSs can be updated multiple times per week, or even per day – so does it make sense to only scan the application annually?

It has become very common for websites to include third-party client-side JavaScript libraries in their applications. Third-party JavaScript is incredibly time-saving for developers and allows them to leverage common functionality in an easy and standardised manner. Notable examples include the near-ubiquitous jQuery UI and React.js, but there are thousands of others providing functionality including visitor tracking.

Many libraries are called by websites from remote servers and contain code that may be managed by an unknown party. Where this is done, the excessive trust placed in these third-party libraries can prove costly if they contain code errors such as XSS or sandbox implementation errors, or if a developer introduces malicious code. Because the JavaScript is typically called directly from a CDN server, it has received no review by the organisation. Critically, the organisation controls only the call, not the content returned, which can change at any moment without the organisation having visibility. Frequent vulnerability scanning reduces this risk and ensures that dangerous third-party JavaScript includes are flagged as early as possible.
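
One simple way to notice that a remotely hosted script has changed is to compare a hash of its content against a known baseline. This is not a technique the article prescribes, just an illustration of the idea; the sketch below produces a value in the `sha384-<base64>` form used by Subresource Integrity, and the function names and sample script contents are hypothetical.

```python
import base64
import hashlib


def sri_digest(content: bytes) -> str:
    """Return a Subresource Integrity style value: 'sha384-' + base64 of the SHA-384 digest."""
    digest = hashlib.sha384(content).digest()
    return "sha384-" + base64.b64encode(digest).decode("ascii")


def has_changed(content: bytes, baseline: str) -> bool:
    """True if the script content no longer matches the recorded baseline digest."""
    return sri_digest(content) != baseline
```

For example, recording `sri_digest(b"console.log('v1');")` as the baseline and later receiving different bytes from the same URL makes `has_changed` return `True`. A changed hash does not prove the new content is malicious, only that it is no longer the content that was last checked.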

It is important that vulnerability scanning is performed not only to detect vulnerabilities, but critically that follow-up scanning is also performed to verify and provide assurance on claimed fixes. Investigations following Equifax’s well-publicised data breach of 2017 appear to suggest that Equifax were aware of the requirement to patch their systems against a known and published vulnerability, but that they failed to retest systems in order to ensure that they had been patched correctly – a failure that led directly to the theft of sensitive data relating to 145 million people.

If you only perform a scan annually, or quarterly, you are failing to follow up on verification that vulnerabilities discovered in previous scans are being remediated in timescales mandated by organisational policy or in line with risk.
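
As a minimal sketch of what this verification might look like, the code below takes findings from an earlier scan, the identifiers still present in the latest rescan, and flags those that have outlived their remediation deadline. The `Finding` type, the identifiers and the deadline values are all hypothetical; real timescales come from your own organisational policy.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical remediation deadlines in days per severity (assumed values).
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90, "low": 180}


@dataclass(frozen=True)
class Finding:
    finding_id: str
    severity: str
    first_seen: date


def overdue(findings: list[Finding], still_present: set[str], today: date) -> list[Finding]:
    """Findings that the latest rescan still reports and that are past their deadline."""
    return [
        f
        for f in findings
        if f.finding_id in still_present
        and (today - f.first_seen).days > SLA_DAYS[f.severity]
    ]
```

A finding that a team has marked as fixed but that still appears in `still_present` is exactly the case a rescan exists to catch. Without the rescan there is no `still_present` set to check against.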

Most teams tasked with vulnerability detection and management will at some point either (a) be asked by their senior management to provide some reporting on trends and metrics on vulnerability detection and remediation rates in terms of burn-down and deltas, or else (b) want to provide these metrics themselves.

The more often your vulnerability scans execute, the more granular your reporting is and the more useful it will prove. If you have one data point per year, your metric granularity is going to be pretty terrible and any trend analysis requested effectively useless and undeliverable.
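
The deltas and burn-down figures mentioned above fall out naturally once each scan is treated as a set of finding identifiers. The sketch below assumes findings can be matched between scans by a stable identifier, which is an assumption about your tooling; the function names are illustrative.

```python
def scan_delta(previous: set[str], current: set[str]) -> dict[str, set[str]]:
    """Classify findings by comparing two consecutive scans."""
    return {
        "new": current - previous,         # appeared since the last scan
        "fixed": previous - current,       # no longer reported
        "persisting": previous & current,  # reported in both scans
    }


def open_counts(scans: list[set[str]]) -> list[int]:
    """Number of open findings per scan, oldest first: the data for a burn-down chart."""
    return [len(scan) for scan in scans]
```

With weekly scans, `open_counts` yields around 52 points a year to plot; with an annual scan it yields one, which is why trend analysis on infrequent scans is effectively impossible.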

The bottom line is that without performing regular vulnerability scans, you do not have consistent visibility on your vulnerability landscape and are one step behind the hackers. If you would like more information on how AppCheck are helping businesses across the UK run regular vulnerability assessments please get in touch on info@localhost

AppCheck is a software security vendor based in the UK, offering a leading security scanning platform that automates the discovery of security flaws within organisations websites, applications, network, and cloud infrastructure. AppCheck are authorized by the Common Vulnerabilities and Exposures (CVE) Program as a CVE Numbering Authority (CNA).

